As software engineers, we architect and develop real-world applications that solve business problems, and those applications embed business logic. Often this logic is difficult to express in application code, and the business rules keep changing, which makes the code hard to maintain.

Why use a Rules Engine?

A rules engine can be handy when the business logic keeps changing, when there is no easy way to implement the logic in code without cluttering it, or when business analysts want an easy way to maintain the business rules themselves. In addition, rules engines implement rule execution algorithms such as the Rete algorithm, which can evaluate large rule sets more efficiently than long chains of traditional if..else or switch statements.

A rules engine is a software component that provides an alternative way to execute business rules. For more information, see Rules engine.

The Rete algorithm efficiently applies the system's rules to the input objects, or facts. For more information, see Rete algorithm.
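To illustrate the idea of keeping rules as data rather than as hard-coded branches, here is a deliberately naive Java sketch. The `Order` fact and both rules are hypothetical, and the evaluation loop simply re-tests every rule against every fact. This is not the Rete algorithm: Rete avoids much of this repeated work by sharing conditions across rules and remembering partial matches as facts change.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;
import java.util.function.Predicate;

// Deliberately naive "rules as data" illustration; NOT the Rete algorithm.
// The Order fact and the two rules are hypothetical examples.
public class NaiveRulesDemo {

    // Hypothetical fact type.
    static final class Order {
        final String customerTier;
        final double amount;
        double discount; // modified by rule actions

        Order(String customerTier, double amount) {
            this.customerTier = customerTier;
            this.amount = amount;
        }
    }

    // A rule pairs a condition (when) with an action (then).
    static final class Rule<T> {
        final String name;
        final Predicate<T> when;
        final Consumer<T> then;

        Rule(String name, Predicate<T> when, Consumer<T> then) {
            this.name = name;
            this.when = when;
            this.then = then;
        }
    }

    // Rules are defined as data, separate from the code that evaluates them.
    static List<Rule<Order>> sampleRules() {
        return List.of(
            new Rule<Order>("gold discount",
                o -> "GOLD".equals(o.customerTier),
                o -> o.discount += 0.10),
            new Rule<Order>("large order discount",
                o -> o.amount > 1000,
                o -> o.discount += 0.05));
    }

    // Brute force: every rule's condition is re-tested against every fact,
    // and the names of the rules that fired are returned in firing order.
    static <T> List<String> fireAll(List<Rule<T>> rules, List<T> facts) {
        List<String> fired = new ArrayList<>();
        for (T fact : facts) {
            for (Rule<T> rule : rules) {
                if (rule.when.test(fact)) {
                    rule.then.accept(fact);
                    fired.add(rule.name);
                }
            }
        }
        return fired;
    }

    public static void main(String[] args) {
        Order order = new Order("GOLD", 1500);
        List<String> fired = fireAll(sampleRules(), List.of(order));
        System.out.println("Fired " + fired + ", discount " + order.discount);
    }
}
```

Adding a rule here means adding an entry to the rule list rather than editing a branch in the business code; a rules engine such as Drools takes this further by loading the rules from files maintained outside the application.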

The Problem

At one of our recent customers' sites, we were tasked with solving a problem in which the application had to evaluate more than a hundred different scenarios to determine a result. The customer also wanted to maintain the rules separately and to accommodate changing requirements without redeploying the main applications whenever a rule changed.

The Solution

We decided to use a centralized rules execution engine based on JBoss Drools (KIE execution server). This keeps the rules engine loosely coupled from the business applications, keeps the rules outside of those applications, and makes the engine available to other applications in the organization that have similar use cases.

The KIE execution server can instantiate and execute rules via REST, JMS, or Java client applications, and it supports updating the rules at runtime.
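As a sketch of the REST option, the following Java snippet (Java 11+, standard library only) builds a request that inserts one fact into a KIE container and fires all rules in a single batch. The container ID `order-rules`, the fact class `com.example.rules.Order`, the host, and the credentials are hypothetical placeholders. The base URL assumes the server's REST path ends in `/rest/server`, and the JSON follows the KIE Server batch-command format as we understand it, so it should be checked against the KIE Server documentation for the version in use.

```java
import java.net.URI;
import java.net.http.HttpRequest;
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Sketch: build (but do not send) a KIE Server REST request that inserts one
// fact and fires all rules. Container ID, fact class, host and credentials
// are hypothetical placeholders.
public class KieRestExecuteSketch {

    // Batch command: insert the fact, then fire all rules. No "lookup" is
    // given, so we assume the container's default KIE session is used.
    static String batchCommand(String factClass, String factJson) {
        return "{\"commands\":["
            + "{\"insert\":{\"object\":{\"" + factClass + "\":" + factJson + "},"
            + "\"out-identifier\":\"fact\",\"return-object\":true}},"
            + "{\"fire-all-rules\":{}}"
            + "]}";
    }

    // POST {serverBaseUrl}/containers/instances/{containerId}
    static HttpRequest executeRequest(String serverBaseUrl, String containerId,
                                      String body, String user, String password) {
        String credentials = Base64.getEncoder().encodeToString(
            (user + ":" + password).getBytes(StandardCharsets.UTF_8));
        return HttpRequest.newBuilder(
                URI.create(serverBaseUrl + "/containers/instances/" + containerId))
            .header("Content-Type", "application/json")
            .header("Accept", "application/json")
            .header("Authorization", "Basic " + credentials)
            .POST(HttpRequest.BodyPublishers.ofString(body))
            .build();
    }

    public static void main(String[] args) {
        String body = batchCommand("com.example.rules.Order",
            "{\"customerTier\":\"GOLD\",\"amount\":1500}");
        HttpRequest request = executeRequest("http://localhost:8080/rest/server",
            "order-rules", body, "user", "pass");
        System.out.println(request.method() + " " + request.uri());
        System.out.println(body);
    }
}
```

The request is only built here; sending it would be a call such as `HttpClient.newHttpClient().send(request, HttpResponse.BodyHandlers.ofString())`, and the response would contain the returned fact under the `fact` out-identifier.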

Architecture

The first version of the rules engine is designed to fit the customer's infrastructure and to use the customer's source control repository, security standards, and CI/CD process. The solution is also designed to recover automatically if the rules engine restarts or crashes.

Deployment Architecture

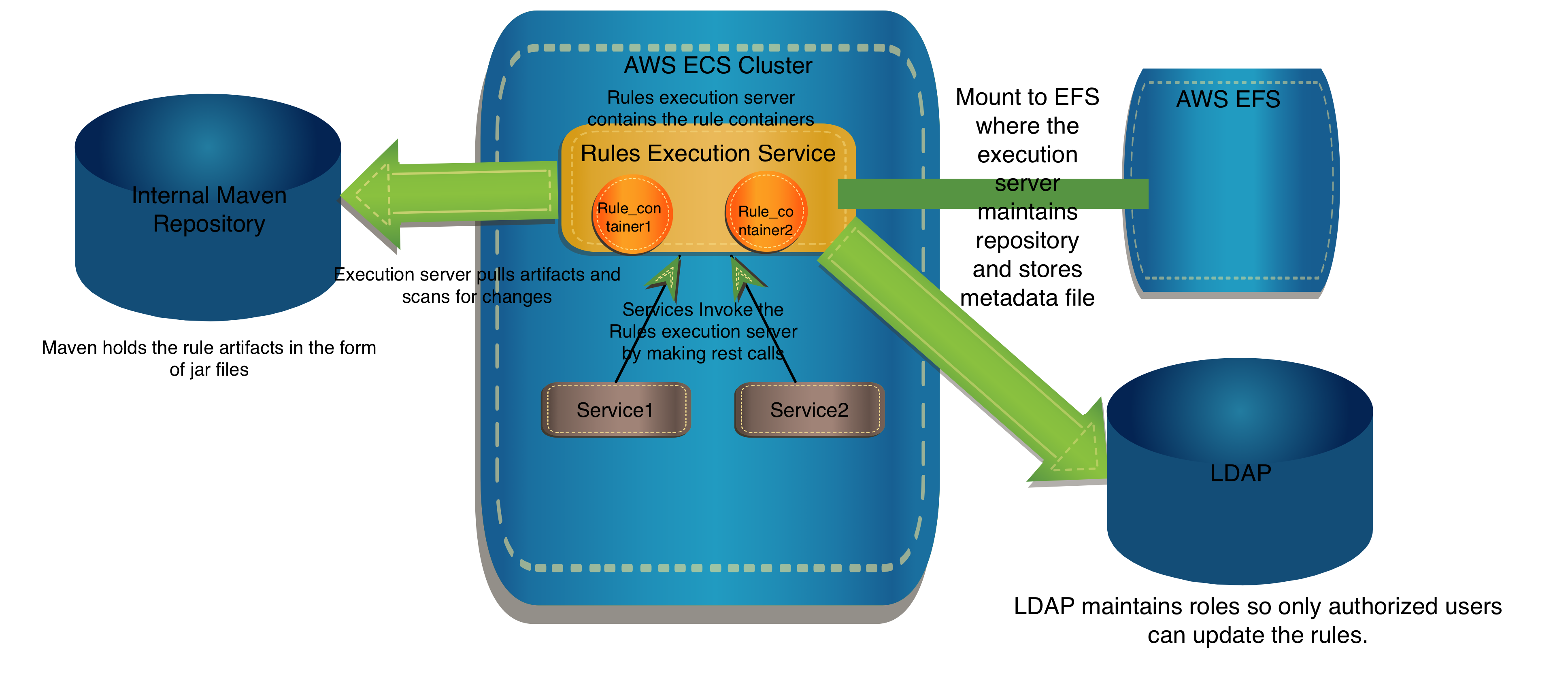

The rules engine is deployed into the existing AWS ECS infrastructure as a Spring Boot application. The service has access to AWS EFS, so it can store its state in a metadata file on the network file system and recover from unexpected restarts.

The service has access to the internal Maven repository, so it can fetch the rules artifacts and load them as KIE containers. Each KIE container represents one set of rules maintained by a project team.
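A KIE container is identified by the Maven coordinates (group ID, artifact ID, version) of a rules artifact. A minimal sketch of registering one through the KIE Server REST API follows, assuming a `PUT` to `/containers/{containerId}` with a container spec in the request body. The container ID and coordinates are hypothetical, and authentication headers are omitted for brevity.

```java
import java.net.URI;
import java.net.http.HttpRequest;

// Sketch: build (but do not send) a request that asks the KIE Server to load
// a rules artifact from the Maven repository as a KIE container.
// Container ID and Maven coordinates are hypothetical placeholders.
public class KieContainerRequestSketch {

    // Container spec: the container ID plus the artifact's release ID (GAV).
    static String containerSpec(String containerId, String groupId,
                                String artifactId, String version) {
        return "{\"container-id\":\"" + containerId + "\","
            + "\"release-id\":{"
            + "\"group-id\":\"" + groupId + "\","
            + "\"artifact-id\":\"" + artifactId + "\","
            + "\"version\":\"" + version + "\"}}";
    }

    // PUT {serverBaseUrl}/containers/{containerId}
    static HttpRequest createContainerRequest(String serverBaseUrl,
                                              String containerId, String specJson) {
        return HttpRequest.newBuilder(
                URI.create(serverBaseUrl + "/containers/" + containerId))
            .header("Content-Type", "application/json")
            .PUT(HttpRequest.BodyPublishers.ofString(specJson))
            .build();
    }

    public static void main(String[] args) {
        String spec = containerSpec("order-rules", "com.example", "order-rules", "1.0.0");
        HttpRequest request = createContainerRequest(
            "http://localhost:8080/rest/server", "order-rules", spec);
        System.out.println(request.method() + " " + request.uri());
        System.out.println(spec);
    }
}
```

Because the artifact is resolved from the internal Maven repository, a team releasing a new version of its rules jar only needs the container pointed at the new version, without redeploying the business applications.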

The service is connected to the customer's LDAP directory for authentication and authorization, so that only authorized users can update the rules.

Development Architecture

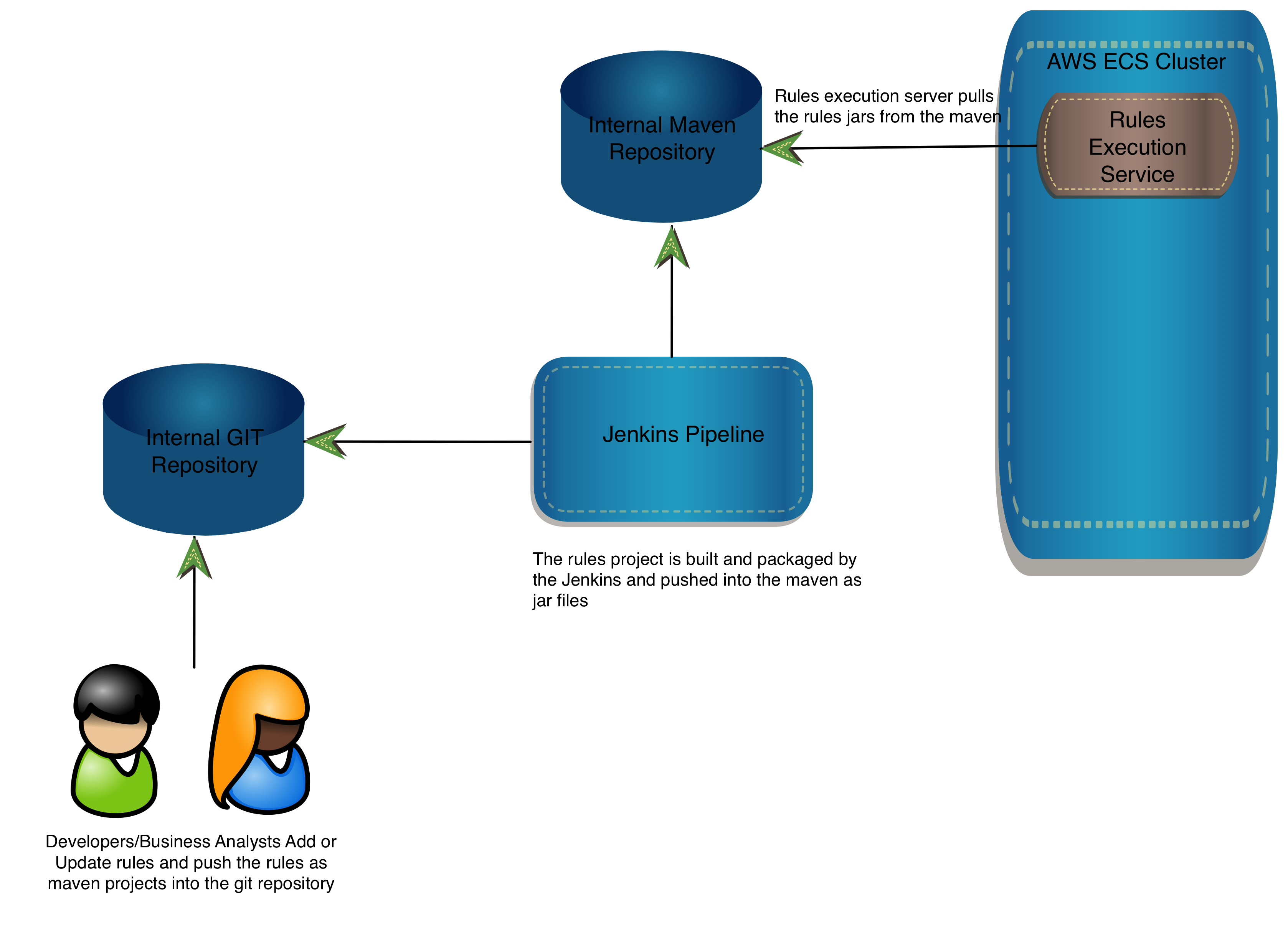

Each development team is responsible for maintaining its own rules, which are kept in the Git repository as Maven projects. Each project contains the rules files in .drl or .xls format, and its pom.xml declares any dependencies the rules need, such as the POJOs used in the rules or other utilities referenced by the rules files.

The project is built and packaged into a jar file by the existing Jenkins pipeline. The built artifact is then pushed to the internal Maven repository, in compliance with the existing release management process.

Implementation

The base Spring Boot application is generated with JHipster, so we can take advantage of all the features that come with it. JHipster is already the standard at the customer's site and supports the existing infrastructure. For more information, see JHipster.

The KIE Server Spring Boot starter is included as a dependency in the pom.xml, and other supporting dependencies are added as needed:

```xml
<dependency>
    <groupId>org.kie</groupId>
    <artifactId>kie-server-spring-boot-starter-drools</artifactId>
    <version>7.15.0.Final</version>
</dependency>
```

Configuration changes in application.yml:

```yaml
kieserver:
  serverId: CustomerRulesEngine
  serverName: Customer KIE Server
  restContextPath: /rest
  drools:
    enabled: true
  dmn:
    enabled: true
  jbpm:
    enabled: false
  jbpmui:
    enabled: false
  casemgmt:
    enabled: false
  optaplanner:
    enabled: false
  location: http://${server.address}:${server.port}${cxf.path}/server

cxf:
  path: /rest

jbpm:
  executor:
    enabled: false
```

The execution server is configured through the following system properties to use a custom Maven settings file and to store its metadata in a custom repository location:

- `kie.maven.settings.custom` = `/usr/kieserver/settings.xml`
- `org.kie.server.repo` = `/mnt/efs/kie/repository` (the mount point of the AWS EFS volume)
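These properties can be passed as `-D` JVM arguments in the container definition. As an alternative, here is a sketch of setting them programmatically before the Spring Boot application starts; the helper only fills in values that were not already supplied on the command line. The `stateFile` helper assumes the server writes its state to `<repository>/<serverId>.xml`, which is our understanding of the KIE Server file-based state repository rather than something stated above; the class and method names are hypothetical.

```java
import java.nio.file.Path;
import java.nio.file.Paths;

// Sketch: default the KIE Server system properties before startup.
// Class and method names are hypothetical; the property keys and paths are
// the ones used in this deployment.
public class KieServerPropertiesSketch {

    static final String MAVEN_SETTINGS = "kie.maven.settings.custom";
    static final String SERVER_REPO = "org.kie.server.repo";

    // Keeps any value already supplied with -D; otherwise applies the default.
    static void setIfAbsent(String key, String value) {
        if (System.getProperty(key) == null) {
            System.setProperty(key, value);
        }
    }

    // In the real application this would run before SpringApplication.run(...).
    static void applyDefaults() {
        setIfAbsent(MAVEN_SETTINGS, "/usr/kieserver/settings.xml");
        setIfAbsent(SERVER_REPO, "/mnt/efs/kie/repository"); // EFS mount
    }

    // Assumption: the server state lives in <repository>/<serverId>.xml, so a
    // restarted task that mounts the same EFS path finds the same state file.
    static Path stateFile(String serverId) {
        return Paths.get(System.getProperty(SERVER_REPO), serverId + ".xml");
    }

    public static void main(String[] args) {
        applyDefaults();
        System.out.println(System.getProperty(MAVEN_SETTINGS));
        System.out.println(stateFile("CustomerRulesEngine"));
    }
}
```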

Improvements

Future versions of the application will allow the rules execution server to scale out, add a front-end router application that routes rule execution requests, and support the rules workbench for creating and modifying rules.
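The routing idea can be sketched as a map from each KIE container ID to the execution servers hosting it, with requests spread across those servers round-robin. Everything here (class name, server URLs, the round-robin policy) is a hypothetical illustration of the planned router, not its actual design.

```java
import java.util.List;
import java.util.Map;
import java.util.Optional;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical sketch of a front-end router: it resolves the execution URL
// for a container by cycling through the servers registered for it.
public class RulesRouterSketch {

    private final Map<String, List<String>> serversByContainer = new ConcurrentHashMap<>();
    private final Map<String, AtomicInteger> counters = new ConcurrentHashMap<>();

    // Registers (or replaces) the server base URLs that host a container.
    void register(String containerId, List<String> serverBaseUrls) {
        serversByContainer.put(containerId, List.copyOf(serverBaseUrls));
        counters.putIfAbsent(containerId, new AtomicInteger());
    }

    // Returns the next server's execution URL, or empty if none is registered.
    Optional<String> route(String containerId) {
        List<String> servers = serversByContainer.get(containerId);
        if (servers == null || servers.isEmpty()) {
            return Optional.empty();
        }
        int index = Math.floorMod(counters.get(containerId).getAndIncrement(), servers.size());
        return Optional.of(servers.get(index) + "/containers/instances/" + containerId);
    }

    public static void main(String[] args) {
        RulesRouterSketch router = new RulesRouterSketch();
        router.register("order-rules",
            List.of("http://rules-a:8080/rest/server", "http://rules-b:8080/rest/server"));
        for (int i = 0; i < 3; i++) {
            System.out.println(router.route("order-rules").orElse("no server"));
        }
    }
}
```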
